FLARE: Focused Leaf-Lesion Awareness via Explicit Relational Modeling for Field Disease Severity Assessment

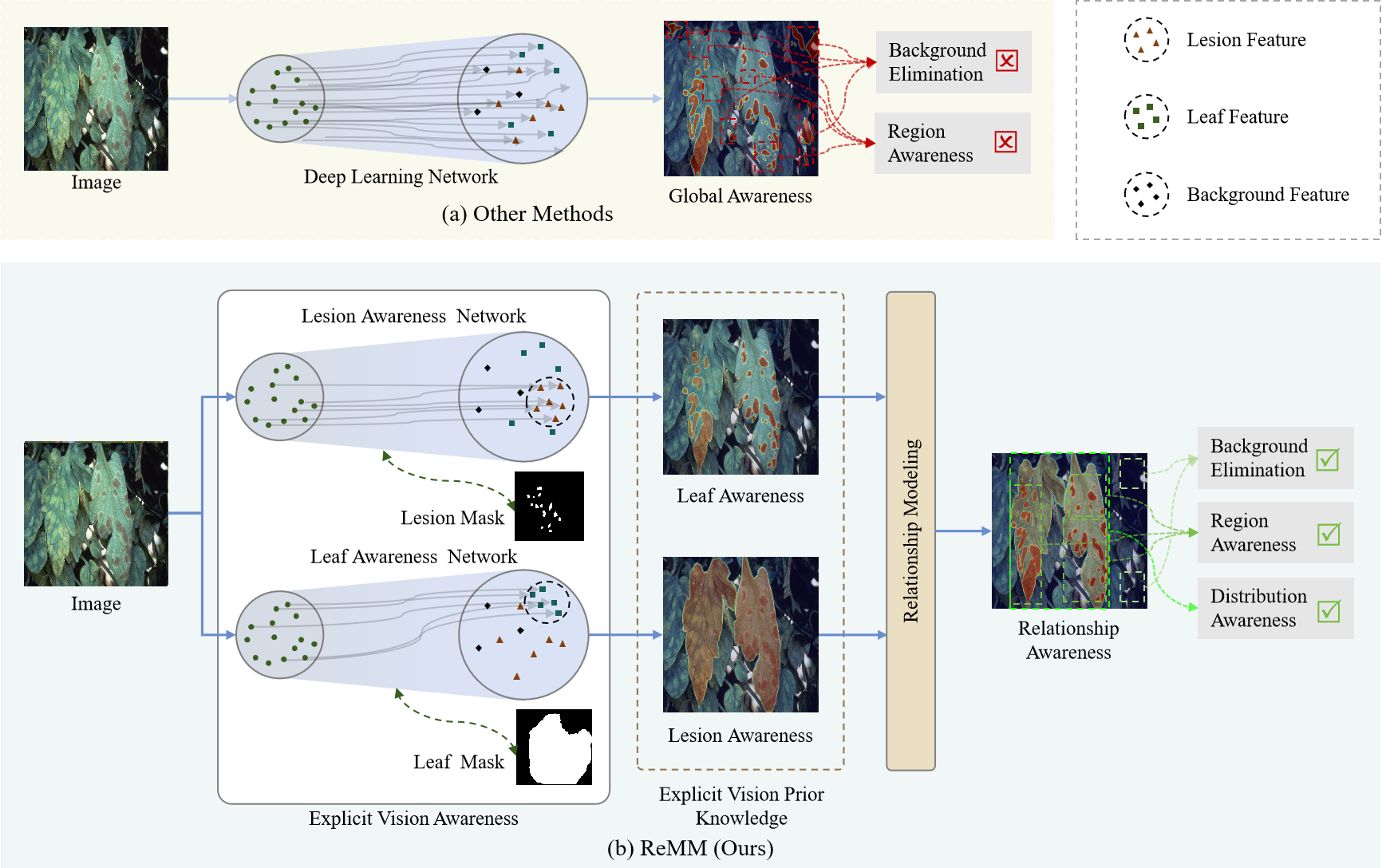

Crops are fundamental to global food security, and their healthy growth is closely tied to national food strategies and social stability. Plant diseases nevertheless remain one of the most serious threats to agricultural production, reducing yields of major crops by up to 30% and causing global economic losses worth hundreds of billions of dollars. The rational use of pesticides is indispensable for maintaining agricultural productivity, but improper or excessive application lowers efficiency, contaminates the environment, and raises production costs. Assessing the severity of plant diseases quantifies disease progression and provides a key foundation for scientific pesticide management, which is vital for stable and sustainable crop yields.

Many current methods depend on hybrid perceptual learning frameworks yet do not attend separately to lesion and leaf regions. As a result, the extracted features are entangled with background information, which biases severity estimates, as shown in Figure 1. In contrast, human vision naturally prioritizes lesion areas and their spatial distribution on leaves while disregarding irrelevant background details. Motivated by this mechanism, our method introduces explicit visual awareness guided by lesion and leaf masks, using them as priors to model the relationship between the two regions and to better capture the spatial distribution of lesions on leaves.

Figure 1. Comparison between existing deep learning methods and our proposed framework. (a) Conventional methods with global lesion awareness tend to blend background, leaf, and lesion features, resulting in limited focus on key regions and weak background suppression. (b) In contrast, our method explicitly incorporates lesion and leaf awareness, enabling effective background filtering and more accurate modeling of lesion distribution across the leaf surface.
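The contrast in Figure 1 can be illustrated with a toy example. The sketch below is not the FLARE implementation; it only assumes that binary leaf and lesion masks are available and shows how mask-guided pooling removes background responses that global pooling would mix in. The function name `masked_average_pool` and all array values are hypothetical.

```python
import numpy as np

def masked_average_pool(features, mask):
    """Average a (C, H, W) feature map over the pixels where the (H, W) mask is True."""
    mask = mask.astype(features.dtype)
    area = mask.sum()
    if area == 0:
        # Empty mask: return a zero vector instead of dividing by zero.
        return np.zeros(features.shape[0], dtype=features.dtype)
    return (features * mask[None]).sum(axis=(1, 2)) / area

# Toy 2-channel feature map (values are illustrative only).
features = np.zeros((2, 4, 4))
features[0] = 1.0            # channel 0: constant response everywhere
features[1, :2, :] = 5.0     # channel 1: strong response on the background rows
features[1, 3, :2] = 2.0     # channel 1: weaker response on the lesion pixels

leaf_mask = np.zeros((4, 4), dtype=bool)
leaf_mask[2:, :] = True      # bottom half of the image is leaf
lesion_mask = np.zeros((4, 4), dtype=bool)
lesion_mask[3, :2] = True    # two lesion pixels on the leaf

global_vec = features.mean(axis=(1, 2))                 # background dominates channel 1
leaf_vec = masked_average_pool(features, leaf_mask)     # background excluded
lesion_vec = masked_average_pool(features, lesion_mask)
```

In this toy case the global vector is dominated by the background response in channel 1, whereas the leaf and lesion vectors reflect only the pixels inside their masks.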

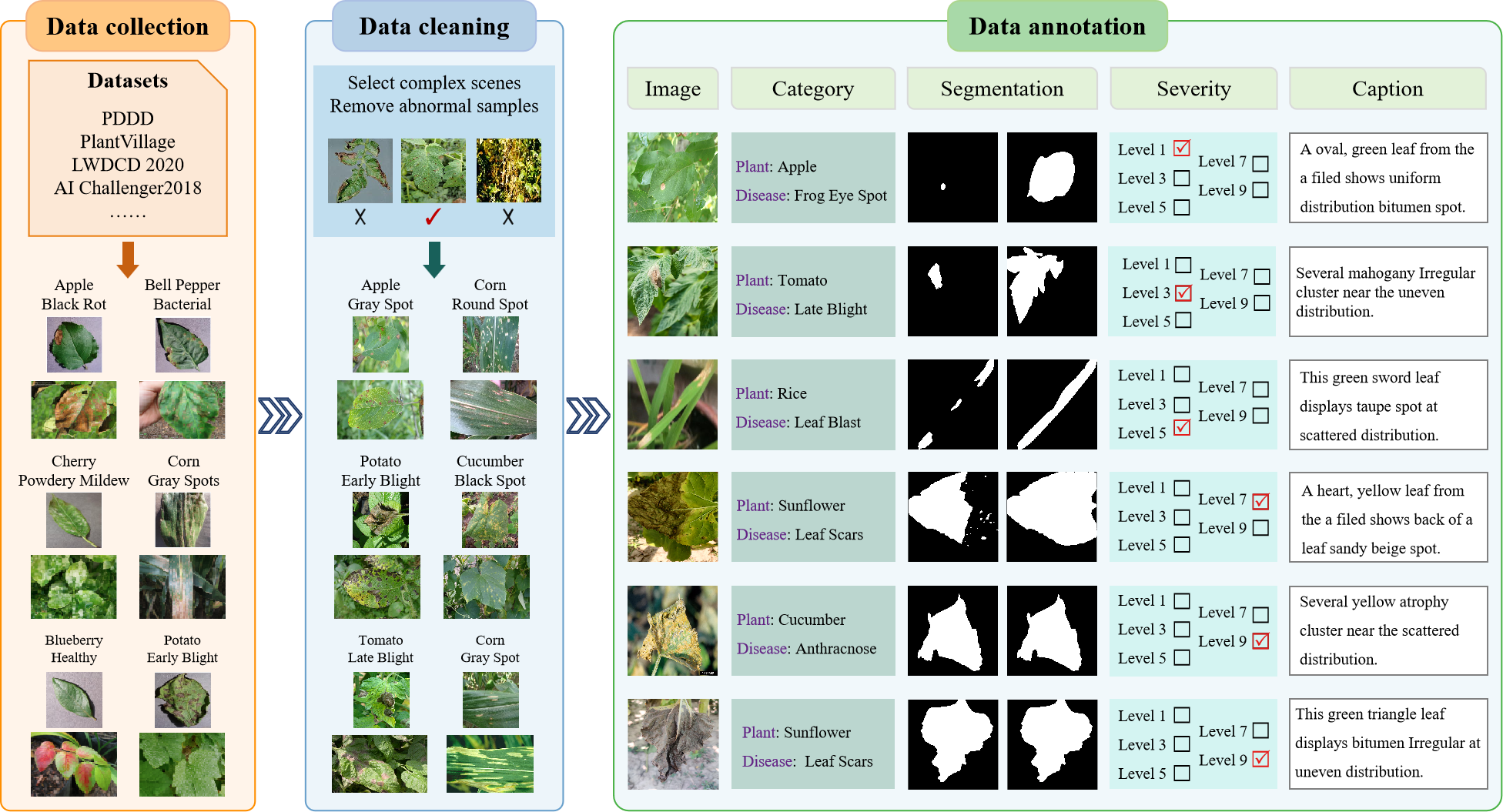

Dataset: Deep learning depends on large and high-quality datasets, yet progress in plant disease research is limited by insufficient annotation types and low scene diversity in existing data. Most current datasets focus only on disease classification, lack fine lesion labeling in complex field environments, and rarely include quantitative severity information. To address these gaps, we built a multi-dimensional dataset specifically designed for real-world field conditions, as shown in Figure 2. It provides annotations for plant and disease categories, leaf and lesion segmentation, severity grading, and image description. The dataset contains 4,301 high-quality images covering 19 plant species and 68 diseases, mainly from crops and fruit-bearing plants. This rich annotation structure supports detailed analysis and diverse downstream applications in intelligent disease diagnosis and management.

Figure 2. Overview of the dataset creation pipeline, comprising data collection, refinement, and annotation.
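To make the annotation types concrete, one sample could be represented roughly as follows. This is a sketch under assumptions: the class name `FieldSample`, its field names, and the example values are hypothetical and do not reflect the dataset's actual file format. The lesion-to-leaf area ratio is included only as an illustrative quantity that the two masks make computable, not as the grading rule used by the authors.

```python
from dataclasses import dataclass
import numpy as np

@dataclass
class FieldSample:
    """Hypothetical container for the annotation types listed above."""
    image_path: str
    plant: str                 # plant category
    disease: str               # disease category
    leaf_mask: np.ndarray      # (H, W) boolean leaf segmentation
    lesion_mask: np.ndarray    # (H, W) boolean lesion segmentation
    severity_grade: int        # severity grading label
    description: str           # free-text image description

    def lesion_to_leaf_ratio(self) -> float:
        """Fraction of leaf pixels that are also lesion pixels."""
        leaf_area = int(self.leaf_mask.sum())
        if leaf_area == 0:
            return 0.0
        lesion_on_leaf = int((self.lesion_mask & self.leaf_mask).sum())
        return lesion_on_leaf / leaf_area

# Illustrative values only; not taken from the dataset.
leaf = np.zeros((4, 4), dtype=bool)
leaf[2:, :] = True             # 8 leaf pixels
lesion = np.zeros((4, 4), dtype=bool)
lesion[3, :2] = True           # 2 lesion pixels, both on the leaf

sample = FieldSample(
    image_path="example.jpg",
    plant="apple",
    disease="scab",
    leaf_mask=leaf,
    lesion_mask=lesion,
    severity_grade=2,
    description="Leaf with small dark lesions near the margin.",
)
ratio = sample.lesion_to_leaf_ratio()
```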

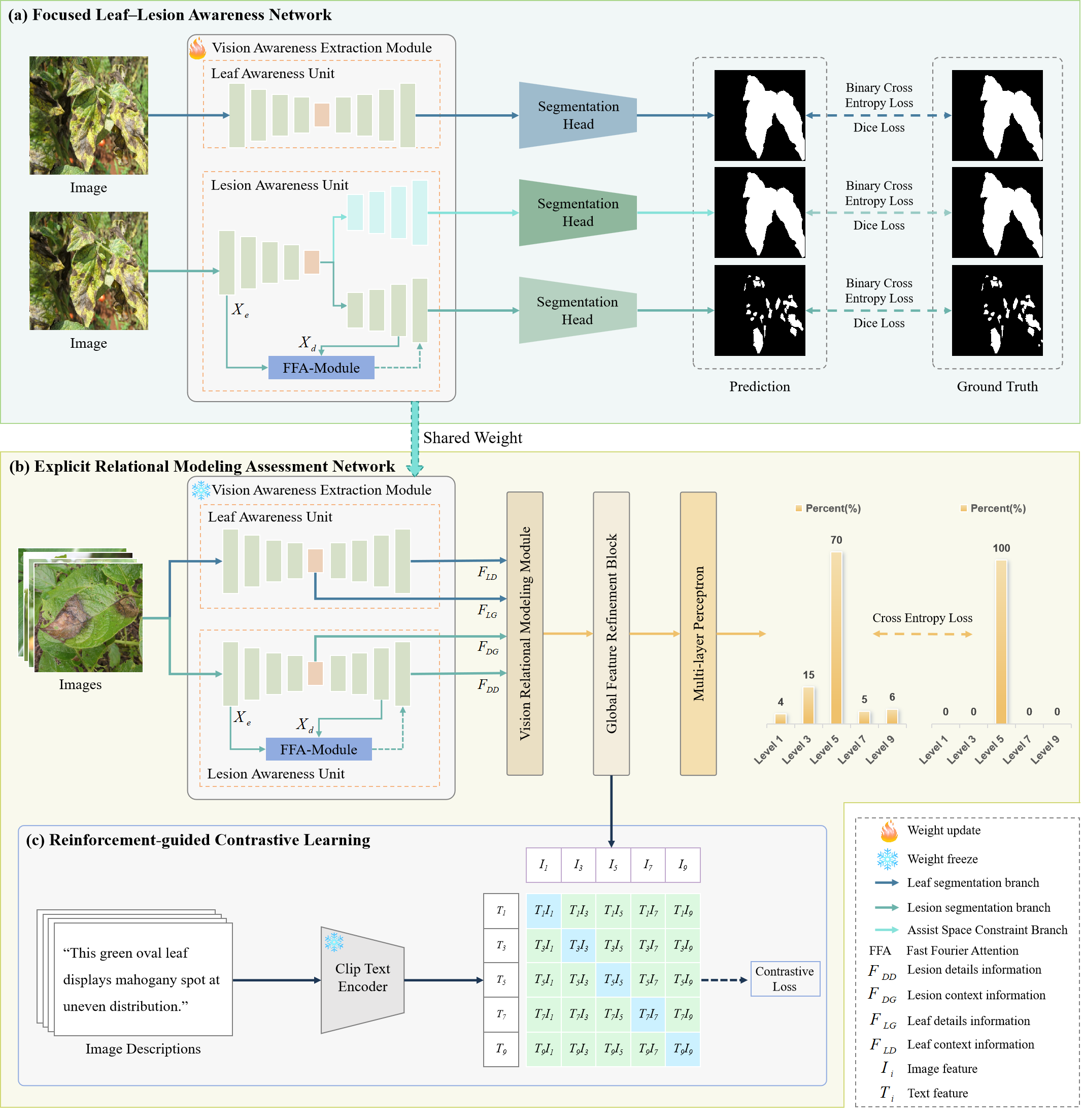

Overview: We introduce FLARE (Focused Leaf-Lesion Awareness via Explicit Relational Modeling) for plant disease severity assessment, as shown in Figure 3. FLARE consists of two core modules: the Focused Leaf-Lesion Awareness Network (FLLA-Net) and the Explicit Relational Modeling Assessment Network (ERMA-Net). FLLA-Net adopts a dual-stream segmentation design to train the Vision Awareness Extraction Module (VAE-Module), which incorporates visual priors to effectively capture both lesion and leaf features. Building upon this, ERMA-Net reuses the pre-trained VAE-Module to independently extract hierarchical representations of leaves and lesions. It then introduces a Vision Relational Modeling Module (VRM-Module) to explicitly learn their spatial and semantic relationships. The extracted features are refined through a global enhancement process and further optimized using a reinforcement-guided contrastive learning strategy to strengthen semantic discrimination in lesion regions. A multi-layer perceptron subsequently predicts disease severity based on the enhanced representations. By jointly optimizing FLLA-Net and ERMA-Net, FLARE offers a robust and interpretable framework for disease severity evaluation, effectively mitigating background interference and handling diverse lesion distributions under complex field conditions.

Figure 3. The overall architecture of FLARE.
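The final prediction step, in which a multi-layer perceptron maps the enhanced representations to a severity grade, might look like the following minimal sketch. The feature dimension, hidden width, number of grades, the concatenation of leaf and lesion vectors, and the random weights are all assumptions made for illustration; the source does not specify them.

```python
import numpy as np

def mlp_severity_head(leaf_vec, lesion_vec, params):
    """Two-layer MLP over concatenated leaf and lesion vectors; returns grade probabilities."""
    x = np.concatenate([leaf_vec, lesion_vec])
    hidden = np.maximum(0.0, params["W1"] @ x + params["b1"])   # ReLU
    logits = params["W2"] @ hidden + params["b2"]
    logits = logits - logits.max()                              # numerical stability
    exp = np.exp(logits)
    return exp / exp.sum()                                      # softmax

rng = np.random.default_rng(0)
dim, hidden_dim, num_grades = 8, 16, 4      # hypothetical sizes
params = {
    "W1": rng.normal(scale=0.1, size=(hidden_dim, 2 * dim)),
    "b1": np.zeros(hidden_dim),
    "W2": rng.normal(scale=0.1, size=(num_grades, hidden_dim)),
    "b2": np.zeros(num_grades),
}

# Stand-ins for the leaf and lesion representations produced upstream.
leaf_vec = rng.normal(size=dim)
lesion_vec = rng.normal(size=dim)

probs = mlp_severity_head(leaf_vec, lesion_vec, params)
predicted_grade = int(np.argmax(probs))
```

With untrained random weights the predicted grade is meaningless; the sketch only shows the shape of the computation.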

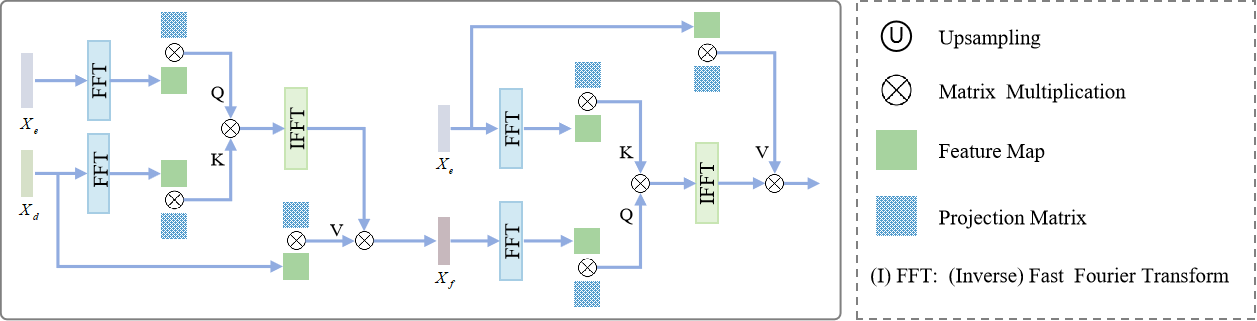

Fast Fourier Attention Module (FFA-Module): Accurate disease severity assessment depends heavily on detailed lesion information, which reflects both the extent and the morphological characteristics of infections. To capture these details, we introduce a frequency-domain analysis strategy that separates image information into high- and low-frequency components: high-frequency components represent lesion edges and textures, whereas low-frequency components describe global structures and backgrounds. Building on this principle, we design a Fast Fourier Attention (FFA) module, shown in Figure 4, which integrates the Fourier transform with an attention mechanism to enhance lesion perception. The module transforms feature maps into the frequency domain to emphasize fine structural details, then reconstructs and fuses them back into the spatial domain.

Figure 4. Framework of the fast Fourier attention module (FFA-Module).
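The frequency split that the FFA-Module builds on can be sketched with NumPy's FFT routines. This is an illustration of the general principle rather than the module itself: the circular cutoff `radius` and the fixed scalar `gain`, which stands in for a learned attention weight, are assumptions.

```python
import numpy as np

def split_frequencies(feature_map, radius):
    """Split a 2-D map into low- and high-frequency parts with a circular mask."""
    h, w = feature_map.shape
    # Move the zero-frequency component to the centre of the spectrum.
    spectrum = np.fft.fftshift(np.fft.fft2(feature_map))
    yy, xx = np.ogrid[:h, :w]
    dist = np.sqrt((yy - h // 2) ** 2 + (xx - w // 2) ** 2)
    low_mask = dist <= radius
    # Transform each band back to the spatial domain.
    low = np.fft.ifft2(np.fft.ifftshift(spectrum * low_mask)).real
    high = np.fft.ifft2(np.fft.ifftshift(spectrum * ~low_mask)).real
    return low, high

# Flat patch with a sharp bright spot: the spot's edges are high-frequency content.
patch = np.ones((8, 8))
patch[3:5, 3:5] = 4.0

low, high = split_frequencies(patch, radius=2)

gain = 1.5                       # hypothetical fixed weight instead of learned attention
enhanced = low + gain * high     # emphasize fine detail, then fuse in the spatial domain
```

Because the two masks partition the spectrum, `low + high` reproduces the input up to floating-point error, so any `gain` above 1 amplifies only the edge and texture content.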

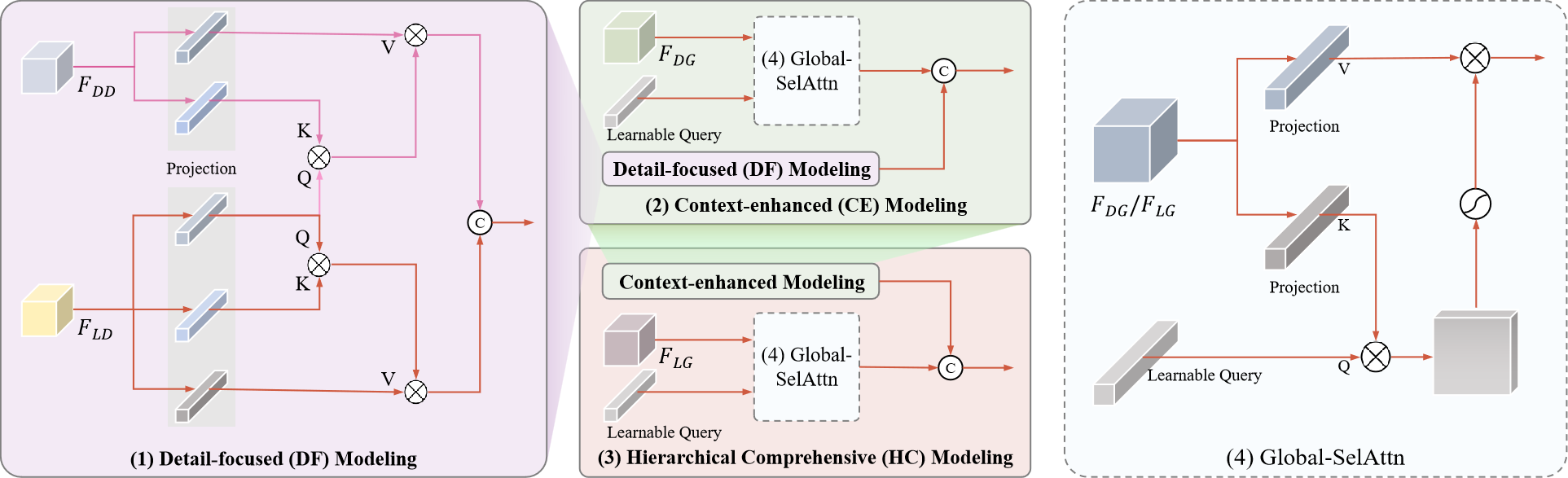

Vision Relational Modeling Module (VRM-Module): To accurately assess plant disease severity, the model must capture fine lesion details while understanding their spatial and semantic relationships with leaves. To achieve this, we design a Vision Relational Modeling (VRM) module that simulates lesion distribution on leaves through multi-level feature interaction, as shown in Figure 5. The VRM module integrates detailed, contextual, and hierarchical information to jointly model the relationship between lesions and leaf structures. By combining local detail perception with global context understanding, it enhances the representation of lesion–leaf dependencies and improves the model’s capacity for precise and robust severity estimation under complex field conditions.

Figure 5. Framework of the vision relational modeling module (VRM-Module).
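One common way to let lesion features interact with leaf features is cross-attention, in which each lesion token is re-expressed as a weighted mix of leaf tokens. The sketch below uses that standard technique as a stand-in; the source does not state that the VRM-Module is built this way, and the token counts, feature dimension, and absence of learned projections are simplifying assumptions.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(x)
    return exp / exp.sum(axis=axis, keepdims=True)

def cross_attention(lesion_tokens, leaf_tokens):
    """Scaled dot-product attention with lesion tokens as queries and leaf tokens as keys/values."""
    d = lesion_tokens.shape[-1]
    scores = lesion_tokens @ leaf_tokens.T / np.sqrt(d)   # (num_lesion, num_leaf)
    weights = softmax(scores, axis=-1)                    # each row sums to 1
    return weights @ leaf_tokens, weights

rng = np.random.default_rng(0)
lesion_tokens = rng.normal(size=(3, 8))   # 3 hypothetical lesion tokens, dimension 8
leaf_tokens = rng.normal(size=(5, 8))     # 5 hypothetical leaf tokens, dimension 8

attended, weights = cross_attention(lesion_tokens, leaf_tokens)
```

Each row of `weights` indicates which leaf tokens a given lesion token relates to most strongly, which is one simple way to expose lesion–leaf dependencies.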